Program Report: Industrial Organization, 2017

The Program on Industrial Organization (IO) was founded in 1990, and grew steadily under the leadership of Nancy Rose, who led the program from its inception until 2014. Jonathan Levin served as program director from 2014 until 2016, when he became dean of the Stanford Graduate School of Business and was succeeded by Liran Einav. The IO program currently has 83 members and holds a winter meeting on the West Coast and a summer meeting during the NBER Summer Institute. Program meetings were known for many years for their nontraditional "discussant presents" format, although in recent years they have more often adhered to the traditional "author presents" model.

Researchers in the Program on Industrial Organization (IO) study consumer and firm behavior, competition, innovation, and government regulation. This report begins with a brief summary of general developments in the last three decades in the range and focus of program members' research, then discusses specific examples of recent work.

When the program was launched in the early 1990s, two developments had profoundly shaped IO research. The first was the development of game-theoretic models of strategic behavior by firms with market power, summarized in Jean Tirole's classic textbook.1 The initial wave of research in this vein focused on applying new insights from economic theory; empirical applications came later. The second was the development of econometric methods to estimate demand and supply parameters in imperfectly competitive markets. Founding program members including Timothy Bresnahan,2 Ariel Pakes,3 and Robert Porter4 played a key role in advancing this work.

Underlying both approaches was the idea that individual industries are sufficiently distinct and industry details sufficiently important that one needs to focus on specific markets and industries in order to test specific hypotheses about consumer or firm behavior, or to estimate models that could be used for counterfactual analysis, such as analysis of a merger or regulatory change. The econometric developments in the field, which emphasized structural modeling of demand and supply, ran somewhat counter to the trend in other fields toward the search for natural experiments to illuminate the causal effects of policy changes.

There were, to be sure, some points of overlap with neighboring fields. A notable example was the role that industrial organization economists played in the activities of the NBER's Program on Productivity, Innovation, and Entrepreneurship (PRIE), where the research agenda embraced the estimation of plant-level costs and productivity and the effects of firm and market characteristics on R&D spending and the rate of innovation.

In the last decade, the scope of program members' research has broadened to encompass more industries and new topics. While studies of traditional manufacturing, service, and retail settings remain an important focus, there has been a rapid growth of research on sectors such as health care,5 education,6 financial markets,7 and the media.8

Expanding the Scope of Research

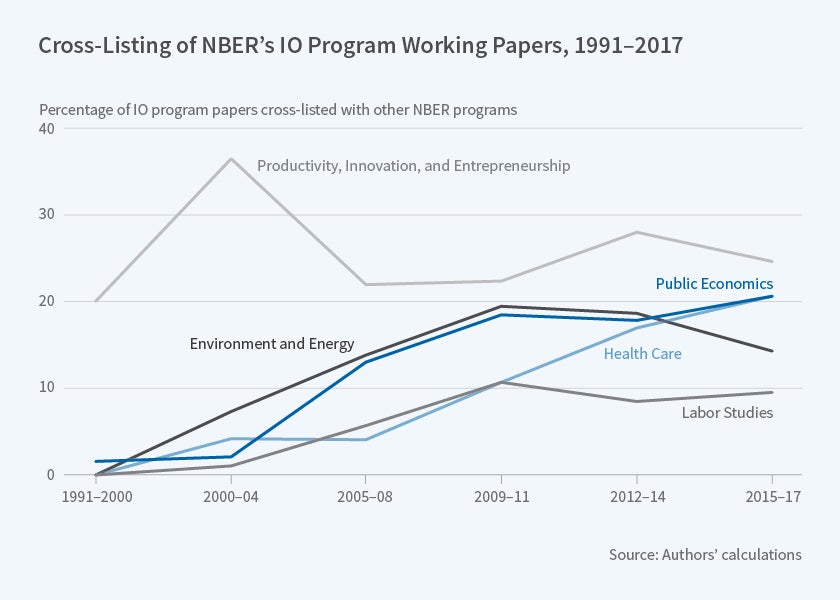

A nice way to illustrate the increase in the breadth of IO research is to examine the rate at which IO program members cross-list their papers with other NBER programs. We analyzed all NBER working papers since 1990 on which at least one author was an IO program affiliate, then computed the share of these papers that were cross-listed with another program. We considered only programs in which at least 5 percent of the papers by IO researchers were cross-listed.
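As a minimal sketch of the calculation just described, the Python snippet below computes the share of papers cross-listed with each other program and drops programs below a 5 percent threshold. The function name, the program codes, and the toy data are hypothetical and are not drawn from the NBER working paper database. Applying the threshold to the pooled sample, rather than year by year, is also an assumption.

```python
from collections import Counter


def cross_listing_shares(papers, home="IO", min_share=0.05):
    """Share of papers cross-listed with each program other than `home`.

    papers: list of sets of program codes, one set per working paper.
    Programs whose share falls below `min_share` are dropped.
    """
    total = len(papers)
    if total == 0:
        return {}
    counts = Counter()
    for programs in papers:
        # Count each other program at most once per paper.
        for prog in set(programs) - {home}:
            counts[prog] += 1
    return {
        prog: n / total
        for prog, n in counts.items()
        if n / total >= min_share
    }


# Hypothetical toy data: 25 papers, each tagged with its program codes.
toy_papers = (
    [{"IO"}] * 16
    + [{"IO", "PR"}] * 5
    + [{"IO", "HC"}] * 3
    + [{"IO", "HC", "LS"}] * 1
)

shares = cross_listing_shares(toy_papers)
for prog, share in sorted(shares.items()):
    print(f"{prog}: {share:.0%}")
```

In the toy data, the "LS" code appears on only one of 25 papers, so it falls below the threshold and is excluded. Repeating the calculation on papers grouped by year would give a time series of the kind a figure could plot.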

Figure 1 plots our findings. It shows an interesting evolution of cross-listing behavior in the last 15 years. While productivity remains a nontrivial focus of work in IO, there has been a remarkable increase in the share of IO papers cross-listed in other fields of applied microeconomics. This started in the early 2000s in the context of environmental regulation and energy — especially electricity — markets, and continued in the last decade with a sharp rise in research on health care markets, insurance markets, labor markets, and on topics that overlap with public economics. While the cross-listing rate with programs other than PRIE was nearly zero in the program's first decade, today nearly 20 percent of IO program papers are cross-listed with Public Economics, 20 percent with Health Care, 15 percent with Environment and Energy Economics, and 10 percent with Labor Studies. We think that two general forces have contributed to this new pattern, which in keeping with the program's emphasis one may label as supply and demand.

On the supply side, econometric methods for studying imperfect competition have matured: From initial "test cases" using retail scanner data to estimate demand and supply for consumer products such as breakfast cereal and other grocery items, these methods increasingly are applied to more complex products such as health insurance, primary schooling, consumer loans, media consumption, and financial products. The explosion of available data from private sector firms and markets has paralleled and facilitated this expansion.

On the demand side, there has been a large shift in many markets, such as electricity and health care, toward regulated competition. Some of these changes have grown out of changes in U.S. regulatory structure which, starting in the 1980s, prioritized private sector competition as the favored approach to improve efficiency and foster innovation. At the same time, there has been an increasing appreciation of the importance of market power in a wide range of industries, such as health care, financial services, retailing, and media. Indeed, these changes continue to be some of the most significant in the U.S. economy, suggesting bright prospects for the relevance and importance of industrial organization research in coming years.

Examples of Recent Research

To illustrate the broadening of research by industrial organization economists, we now summarize several specific papers. We have chosen these examples to underscore the broadening spectrum of industries and topics addressed by program members and the variety of approaches and tools being used to study competition and markets. These examples are not meant to be a summary of the much broader scope of research by program affiliates. All of the recent working papers by program affiliates may be found at www.nber.org/papersbyprog/IO.html. This body of research includes large swaths of work on trade, media, political economy, and energy, as well as traditional competition policy, innovation, and regulation topics.

Competition in Health Insurance Markets

The U.S. health care system increasingly revolves around regulated health care markets. Today, 11 million Americans are enrolled in health plans through Affordable Care Act (ACA) exchanges, 17 million in Medicare Advantage plans, 55 million in managed Medicaid plans, and 41 million in Medicare Part D plans. In each case, private insurers compete under market rules that regulate contract features, pricing, and risk adjustment. Larger employers frequently also sponsor health plan choice, again creating an environment of managed competition. These developments raise important questions about market power, market design, and asymmetric information.

Competition has been a central concern because health insurance markets are heavily concentrated. In the California Health Insurance Exchange, four insurers have 95 percent of the market. Insurer concentration is even higher in many state exchanges and Medicare Advantage regions. In traditional markets, market power raises consumer prices. This point is sometimes contested in health insurance markets because hospitals and health care providers similarly enjoy considerable market power, and a dominant health insurer may enjoy the ability to negotiate favorable prices, lowering costs for consumers.

Many recent papers by IO program members have studied this situation. For example, Kate Ho and Robin Lee examine health plan choice sponsored by CalPERS for California's roughly 1.2 million state employees.9 Using data on plan choices, medical claims, and prices insurers pay to hospitals, they develop an econometric model of hospital-insurer bargaining, premium setting, plan choice, and health care utilization, and simulate the effect of having fewer insurers.

Their analysis highlights the importance of both traditional market power and bargaining power following a hypothetical merger. Holding hospital prices fixed, a merger raises consumer premiums, but in some markets, greater leverage in bargaining not only counteracts this direct effect but leads to overall lower consumer prices. Ho and Lee show how the magnitude of the competing effects varies across cities and market configurations.

Another study, by Benjamin Handel, Igal Hendel, and Michael Whinston, examines a key issue in the ACA exchanges, again from a quantitative perspective.10 Their research, which recently received the Econometric Society's Frisch Medal, focuses on the costs and benefits of "community rating," under which insurers are not allowed to charge differential premiums based on health status. Community rating protects individuals with pre-existing conditions, and in a forward-looking sense protects healthy individuals who might in the future become sick, insuring them against what is sometimes called "reclassification risk." However, it also creates the potential for adverse selection if healthy people opt out to avoid paying high premiums, or choose stripped-down plans. Much of the debate around the ACA has centered on these dynamics and how best to address them.

Handel, Hendel, and Whinston develop an elegant model that allows them to study this situation empirically, combining the classic adverse selection theory with detailed plan choice and claims data from a large private employer to estimate the key demand and supply parameters. Among many interesting findings, their results suggest that higher-income employees would do better under health-based pricing, although not by that much, while community rating, as under the ACA, is hugely important for lower-income workers or for workers on something resembling a fixed income, which may be more representative of the current mix of ACA enrollees.

Both of these studies illustrate the power of using quantitative models. The theoretical trade-offs are well understood, but theory alone does not indicate which effect dominates, so detailed data and an econometric model are needed to measure their relative importance.

Financial Market Microstructure

The design of market institutions and the potential for market failures resulting from design choices have been major themes at recent IO program meetings. Our second example is drawn from financial markets and again illustrates the breadth of industry focus among NBER IO members and the diversity of methodological approaches.

The last 15 years or so have seen a big shift in financial markets toward electronic trading. One of the phenomena associated with this has been the emergence of high-frequency trading and the associated race for speed, with large financial firms making large investments in network infrastructure to procure a speed advantage in getting their orders to the electronic exchange.

Eric Budish, Peter Cramton, and John Shim have studied this development and analyzed the potential consequences of shifting from continuous trading to trading in discrete, albeit closely spaced, intervals.11 They use a striking example to demonstrate the arbitrage opportunities created for high-frequency traders (HFTs) in current markets. The example involves two contracts that track the S&P 500, an exchange-traded fund (ETF) that trades in New York and a futures contract that trades in Chicago. The securities move together with near-perfect correlation on a second-by-second time scale. But at a finer resolution of milliseconds the correlation breaks down, because when there is a trade on one contract that moves its price up or down, it takes several milliseconds for quotes on the other contract to adjust. During that interval, an arbitrage opportunity exists and, with sufficient speed, a trader may be able to see a trade in one market and execute a trade against a "stale" quote in the other market.

Remarkably, the time for these arbitrage gaps to close has narrowed dramatically as firms have invested in increasingly fast communication technology, but the dollar magnitude of the opportunities has remained constant. The reason is that if the price in Chicago ticks up one index point, and the trader's buy order gets to New York before the price change, the profit is one index point, regardless of how fast this happens. So the incentive to be fastest does not go away as everyone gets faster.

Budish, Cramton, and Shim develop a simple model to analyze this speed race in public equity markets and organize the empirical facts described above. In their model, HFTs endogenously play two roles. First, they compete to create liquidity — to post bids and asks — which is good for regular traders. Second, they compete to "snipe" stale quotes, which creates a problem for people who post bids and asks and leads to wider bid/ask spreads and reduced market liquidity. The researchers argue that the problem is not HFTs per se. Rather, it is the market rules that foster competition on speed by prioritizing trades based on their arrival time rather than their price.

They analyze alternative market design rules and suggest that moving from continuous time trading to what they call frequent batch auctions (auctions that run at frequent, fixed intervals — for example, every tenth of a second) might improve the efficiency of public equity markets. This possibility has attracted attention from the Securities and Exchange Commission and other regulators.
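To make the mechanics of a batch auction concrete, here is a minimal Python sketch of clearing one batch of limit orders at a single uniform price. It is an illustration under stated assumptions, not the authors' specification: the function name and the sample orders are hypothetical, prices are in integer cents, quantities are positive, and the clearing price is taken as the midpoint of the range of market-clearing prices. Rationing among orders tied at the marginal price is not modeled.

```python
def clear_batch(bids, asks):
    """Clear one batch of limit orders at a single uniform price.

    bids, asks: lists of (limit_price, quantity) with positive quantities.
    Returns (price, volume), or (None, 0) if no bid meets any ask.
    """
    bids = sorted(bids, key=lambda order: -order[0])  # highest bid first
    asks = sorted(asks, key=lambda order: order[0])   # lowest ask first
    i = j = 0
    volume = 0
    bid_left = bids[0][1] if bids else 0
    ask_left = asks[0][1] if asks else 0
    last_bid = last_ask = None

    # Match the best remaining bid with the best remaining ask
    # for as long as the two prices cross.
    while i < len(bids) and j < len(asks) and bids[i][0] >= asks[j][0]:
        traded = min(bid_left, ask_left)
        volume += traded
        last_bid, last_ask = bids[i][0], asks[j][0]
        bid_left -= traded
        ask_left -= traded
        if bid_left == 0:
            i += 1
            bid_left = bids[i][1] if i < len(bids) else 0
        if ask_left == 0:
            j += 1
            ask_left = asks[j][1] if j < len(asks) else 0

    if volume == 0:
        return None, 0

    # Any price in [low, high] clears the batch; take the midpoint.
    low = last_ask if i >= len(bids) else max(last_ask, bids[i][0])
    high = last_bid if j >= len(asks) else min(last_bid, asks[j][0])
    return (low + high) / 2, volume


# Hypothetical orders collected during one batch interval (prices in cents).
bids = [(1002, 100), (1001, 50), (999, 200)]
asks = [(998, 80), (1000, 100), (1003, 50)]
price, volume = clear_batch(bids, asks)
print(price, volume)
```

Because orders are sorted by price before matching, the order in which they arrived within the interval has no effect on the outcome. That is the feature of batching that removes the reward for being a few milliseconds faster than a rival.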

Digital Advertising

Researchers have become increasingly interested in the nature of competition and the determinants of firm behavior in the digital economy. One example of research in this area concerns the market for internet search advertising. Internet advertising is among the fastest-growing industries, with search advertising revenues of approximately $37 billion in 2017. Google and Facebook have become two of the world's largest companies on the strength of their advertising sales.

Relative to traditional advertising, such as television commercials, a common argument for internet advertising, and especially search advertising, is that it solves the fundamental problem in the industry — the problem that half the money is wasted and no one knows which half. Internet advertising, so the argument goes, can be measured and targeted. As the industry has grown, researchers have focused on trying to assess just how much value is created in digital advertising, how effective it is in swaying people's behavior, and how any resulting surplus is divided between consumers, advertisers, and internet platforms.

One paper that illustrates recent research in this area is by Thomas Blake, Chris Nosko, and Steven Tadelis.12 Their study is also an example of a recent trend in the field toward working with private companies — sometimes to get access to their data, in other cases to run experiments. This project got started when Tadelis and Nosko were on leave at eBay and Blake was a full-time economist there, with the project presumably generating value (or at least interest) for eBay, while also being of significant academic interest.

The study begins with the observation that it is not necessarily easy to measure the value of search advertising. The researchers illustrate this point by making a distinction between "non-branded search" and "branded search." In the first case, a consumer may search for, say, a guitar, and search ads may direct him or her to specific sellers. In the second case, if the consumer searches for, say, "Macy's," he or she may see Macy's advertising in response to this search, although it seems natural to conjecture that he or she would have ended up on Macy's website even without seeing the ad. But a naïve data analysis may suggest that Macy's ad is incredibly successful because many people are likely to click on the ad and get to Macy's.

To study this question, Blake, Nosko, and Tadelis design and report on a large-scale experiment they ran in collaboration with eBay in which they shut down advertising for 30 percent of the company's U.S. internet traffic for two months and measured the results. They first experiment by shutting down advertising against the keyword "eBay." As may have been conjectured, shutting down "branded search ads" makes little difference. Without the ad, users simply click on the organic search result and find their way to the eBay website, and the overall number of clicks on the site remains essentially constant.

They then go to the non-branded search advertising, and shut down eBay advertising for generic keywords such as "guitar" in randomly selected geographic areas in the United States. The overall effect is not zero, but it is small. The authors break down the estimated impact by frequency and recency of the user (how many times and how recently they have visited eBay), and show that search advertising for eBay is effective when the ads are shown to users who are not eBay shoppers already, or who have not been to eBay in a long time. Such users account for a relatively small share of the overall volume, explaining the small aggregate effect. Although many advertisers on Google are not well known to searchers, most people are so aware of eBay, and potentially of other large advertisers, that they don't need Google to find it.
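The arithmetic behind the small aggregate effect can be sketched in a few lines of Python. The segment names, traffic shares, and outcome values below are hypothetical and are not taken from the study. The snippet only illustrates how a sizable effect in a small segment, combined with no effect in a large one, averages out to a small overall effect.

```python
from statistics import mean


def ad_effect(outcome_ads_on, outcome_ads_off):
    """Difference in mean outcome per user between areas with ads on and ads off."""
    return mean(outcome_ads_on) - mean(outcome_ads_off)


def aggregate_effect(segments):
    """Share-weighted average of the segment-level effects."""
    return sum(
        seg["share"] * ad_effect(seg["on"], seg["off"])
        for seg in segments.values()
    )


# Hypothetical data: per-user outcomes in areas with ads on and ads off,
# split by how familiar the user already is with the site.
segments = {
    "new_or_lapsed": {"share": 0.1, "on": [12, 8, 10], "off": [9, 7, 8]},
    "frequent": {"share": 0.9, "on": [50, 52, 48], "off": [50, 51, 49]},
}

effects = {
    name: ad_effect(seg["on"], seg["off"]) for name, seg in segments.items()
}
total = aggregate_effect(segments)
print(effects, total)
```

Random assignment of areas is what justifies reading the simple difference in means as a causal effect. Without it, the comparison would suffer from the same problem as the naive click-based analysis described earlier.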

Endnotes

T. Bresnahan and P. Reiss, "Entry and Competition in Concentrated Markets," Journal of Political Economy, 99(5), 1991, pp. 977-1009.

S. Berry, J. Levinsohn, and A. Pakes, "Automobile Prices in Market Equilibrium," NBER Working Paper 4264, January 1993, and Econometrica, 63(4), 1995, pp. 841-90.

R. Porter, "The Role of Information in U.S. Offshore Oil and Gas Lease Auctions," NBER Working Paper 4185, October 1992, and Econometrica, 63(1), 1995, pp. 1-27.

K. Ho and R. Lee, "Insurer Competition in Health Care Markets," NBER Working Paper 19401, September 2013, and Econometrica, 85(2), 2017, pp. 379-417; B. Handel, I. Hendel, and M. Whinston, "Equilibria in Health Exchanges: Adverse Selection vs. Reclassification Risk," NBER Working Paper 19399, September 2013, and Econometrica, 83(4), 2015, pp. 1261-313.

D. Deming, J. Hastings, T. Kane, and D. Staiger, "School Choice, School Quality and Postsecondary Attainment," NBER Working Paper 17438, September 2011, and American Economic Review, 104(3), 2014, pp. 991-1013; N. Agarwal and P. Somaini, "Demand Analysis using Strategic Reports: An Application to a School Choice Mechanism," NBER Working Paper 20775, December 2014, and Econometrica, forthcoming.

E. Budish, P. Cramton, and J. Shim, "The High-Frequency Trading Arms Race: Frequent Batch Auctions as a Market Design Response," presented in the NBER IO Summer 2014 meeting, and Quarterly Journal of Economics, 130(4), 2015, pp. 1547-621; A. Hortacsu, J. Kastl, and A. Zhang, "Bid Shading and Bidder Surplus in the U.S. Treasury Auction System," NBER Working Paper 24024, November 2017, and American Economic Review, forthcoming.

M. Gentzkow and J. Shapiro, "What Drives Media Slant? Evidence from U.S. Daily Newspapers," NBER Working Paper 12707, November 2006, and Econometrica, 78(1), 2010, pp. 35-71; G. Crawford, R. Lee, M. Whinston, and A. Yurukoglu, "The Welfare Effects of Vertical Integration in Multichannel Television Markets," NBER Working Paper 21832, December 2015.

K. Ho and R. Lee, "Insurer Competition in Health Care Markets," NBER Working Paper 19401, September 2013, and Econometrica, 85(2), 2017, pp. 379-417.

B. Handel, I. Hendel, and M. Whinston, "Equilibria in Health Exchanges: Adverse Selection vs. Reclassification Risk," NBER Working Paper 19399, September 2013, and Econometrica, 83(4), 2015, pp. 1261-313.

E. Budish, P. Cramton, and J. Shim, "The High-Frequency Trading Arms Race: Frequent Batch Auctions as a Market Design Response," presented in the NBER IO Summer 2014 meeting, and Quarterly Journal of Economics, 130(4), 2015, pp. 1547-621.

T. Blake, C. Nosko, and S. Tadelis, "Consumer Heterogeneity and Paid Search Effectiveness: A Large Scale Field Experiment," NBER Working Paper 20171, May 2014, and Econometrica, 83(1), 2015, pp. 155-74.