Algorithms, Judicial Discretion, and Pretrial Decisions

The relative performance of data-driven algorithms and human decisionmakers, who are often able to override algorithmic recommendations, is an active subject of study in many settings. In a new study of pretrial release decisions by judges, Algorithmic Recommendations and Human Discretion (NBER Working Paper 31747), researchers Victoria Angelova, Will S. Dobbie, and Crystal S. Yang find that a small fraction of judges outperform the algorithm, while most do not.

The researchers analyze data on pretrial decisions made by judges in a US city between October 2016 and March 2020. The city used an algorithm to produce a pretrial misconduct risk score and a release recommendation for each defendant. Judges had access to these scores as well as to a series of reports that detailed the information considered by the algorithm, which included the defendants’ parole/probation status, the number and details of any prior arrests or convictions, and age at first arrest. The judges also had access to other information that was not used in the algorithmic risk score and release recommendation, including demographics, details of the current charges, and aggravating risk factors like mental health status.

When bail judges can overrule the algorithmic recommendation regarding pretrial release, 90 percent underperform the algorithm.

The judges overrode the algorithm’s release recommendations for 12 percent of low-risk defendants for whom the algorithm recommended release and 54 percent of high-risk defendants for whom the algorithm recommended detention. The researchers investigated these override decisions to learn whether the judges disagreed with the algorithm’s implicit assumption of the acceptable risk threshold for pretrial release, or whether they made different assessments of the risk levels of different defendants in light of the information they received. The researchers constructed a counterfactual algorithmic misconduct rate based on the algorithm’s recommendations at each judge’s release rate. For each judge, they then compared this counterfactual rate with the actual pretrial misconduct rate among the defendants that judge released, which let them assess how judicial discretion affected the accuracy of release decisions.
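The logic of this comparison can be illustrated with a small Python sketch. It is a simplified toy version, not the researchers' estimation method: the `Case` record, the function names, and the data are all hypothetical, and the sketch assumes that the misconduct outcome is known for every defendant. In real data, misconduct is observed only for defendants who were released, a selection problem that the study has to address and that this sketch does not attempt to reproduce.

```python
from dataclasses import dataclass


@dataclass
class Case:
    risk_score: float  # algorithmic risk score (higher = riskier); hypothetical scale
    released: bool     # the judge's actual release decision
    misconduct: bool   # outcome if released; ASSUMED known for every case (toy data only)


def misconduct_rate(cases):
    """Share of the given cases that end in pretrial misconduct."""
    return sum(c.misconduct for c in cases) / len(cases)


def compare_judge_to_algorithm(cases):
    """Compare a judge's misconduct rate with an algorithmic counterfactual
    that releases the same number of defendants, lowest risk score first."""
    judge_released = [c for c in cases if c.released]
    if not judge_released:
        raise ValueError("the judge released no defendants; rates are undefined")

    # Counterfactual: hold the release rate fixed, but let the risk score decide who is released.
    by_risk = sorted(cases, key=lambda c: c.risk_score)
    algo_released = by_risk[: len(judge_released)]

    judge_rate = misconduct_rate(judge_released)
    algo_rate = misconduct_rate(algo_released)
    return judge_rate, algo_rate, judge_rate - algo_rate


# Made-up caseload for a single hypothetical judge.
cases = [
    Case(0.1, True, False),
    Case(0.2, True, False),
    Case(0.3, False, False),
    Case(0.4, True, True),
    Case(0.6, False, False),
    Case(0.7, True, True),
    Case(0.8, False, True),
    Case(0.9, False, True),
]

judge_rate, algo_rate, gap = compare_judge_to_algorithm(cases)
print(judge_rate, algo_rate, gap)  # 0.5 0.25 0.25
```

In this made-up caseload, the judge and the counterfactual both release four of eight defendants, so any gap in misconduct rates reflects who was released rather than how many. A positive gap means the judge underperforms the algorithm at the same release rate, and a negative gap means the judge outperforms it.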

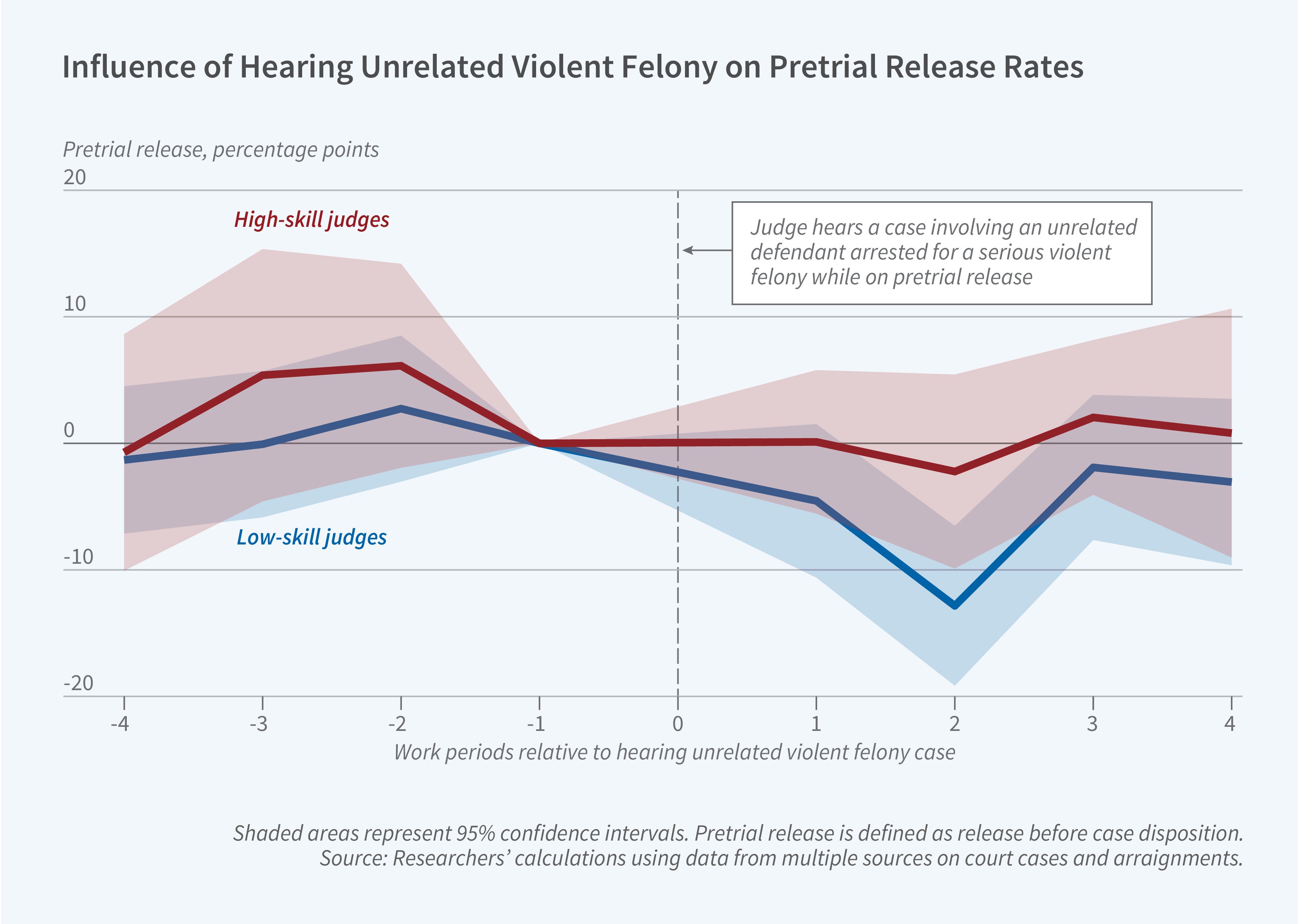

On average, the judges underperform the algorithm, with pretrial misconduct rates that are 2.4 percentage points higher than the algorithmic counterfactual at the same release rate. However, there was important heterogeneity across the judges, with approximately 90 percent underperforming the algorithm but 10 percent outperforming it. The two groups of judges had similar demographics, political leanings, and years of experience, but the lower-performing judges were more likely to have a background in law enforcement. They were also more likely to be affected by information extraneous to the case at hand. For example, if, prior to a pretrial release hearing, a low-performing judge heard a case involving an unrelated defendant who was arrested for a serious felony while on pretrial release, the judge’s release rate for other defendants declined in subsequent work periods. High-performing judges did not exhibit this pattern of behavior.

In an original survey conducted by the researchers, lower-performing judges appeared to place greater importance on demographic factors including race, while higher-performing judges instead focused on non-demographic factors not considered by the algorithm, such as substance abuse status and the ability to pay bail. Consistent with these stated preferences, lower-performing judges were more likely to assign monetary bail while higher-performing judges were more likely to attach nonmonetary conditions, such as drug treatment or counseling, to release. The researchers thus conclude that the use of valuable private information not considered by the algorithm can make it possible for a skilled human decisionmaker and an algorithm working together to outperform the algorithm alone.

— Emma Salomon

This research was funded by the Russell Sage Foundation and Harvard University.